Learning of classifiers for the case of three-dimensional data has been investigated e.g., in.

Although several labeled datasets for 3D point clouds exist, their scope is more limited than in the case of 2D camera images, which hampers the transfer to custom domains. Thus, the necessary labeling of training data (labeling) must be done manually for specific applications. To avoid the time-consuming manual labeling process of 3D point clouds and thus to provide a tool for rapid generation of ML training data across many domains, we have developed the BLAINDER add-on, a programmatic AI extension of the open-source software Blender.

Supervised learning is usually used for classification tasks and, in contrast to unsupervised learning, requires semantically labeled examples for training. For two-dimensional image data, the learning of classifiers from examples is described e.g., in. One option for automated processing of point clouds with ML, e.g., for segmentation, is unsupervised learning. The classification of data points into groups (also: clusters) is done by grouping elements that are as similar as possible to each other. For example, a cluster analysis can be used to segment a point cloud into certain parts. More detailed information on this can be found in.
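To make the idea of cluster-based point-cloud segmentation concrete, the following minimal sketch groups points that lie within a fixed distance of each other (simple Euclidean region growing). The function name `euclidean_clusters`, the `radius` parameter and the toy point cloud are assumptions chosen for illustration; this is not the method used in the work described here, and practical pipelines would typically rely on a spatial index rather than this quadratic search.

```python
from collections import deque
from math import dist


def euclidean_clusters(points, radius):
    """Assign a cluster id to every point.

    Two points end up in the same cluster if they are connected by a chain
    of neighbours that are each at most `radius` apart (hypothetical helper).
    """
    labels = [-1] * len(points)  # -1 marks "not yet assigned"
    next_id = 0
    for seed in range(len(points)):
        if labels[seed] != -1:
            continue
        labels[seed] = next_id
        queue = deque([seed])
        # Grow the cluster outwards from the seed point.
        while queue:
            i = queue.popleft()
            for j, candidate in enumerate(points):
                if labels[j] == -1 and dist(points[i], candidate) <= radius:
                    labels[j] = next_id
                    queue.append(j)
        next_id += 1
    return labels


# Toy point cloud with two well-separated groups (illustrative values).
cloud = [
    (0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.0, 0.1, 0.0),
    (5.0, 5.0, 5.0), (5.1, 5.0, 5.0),
]
segment_ids = euclidean_clusters(cloud, radius=0.5)
```

No labels are needed for this grouping, which is what distinguishes it from the supervised setting; the resulting segment ids carry no semantic meaning by themselves.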

Three questions are important with respect to such data. These are the classification of individual objects, the recognition of the components of objects (part segmentation), and the semantic segmentation of several objects in a scene. Procedures of supervised learning require a large amount of training data.

Various physical principles are used for depth sensing, such as electromagnetic waves (Radar), acoustic waves (Sonar) or laser beams (LiDAR). The time difference between emitting and receiving a signal gives information about the distance covered. For real-world applications, multiple temporally and spatially offset measurements are used to obtain a point-cloud representation of the environment.
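The relation between time difference and distance can be sketched as follows: the signal travels to the surface and back, so the one-way range is half the propagation speed multiplied by the measured time. The function name and the speed of sound in water (a rough, medium-dependent value) are assumptions for illustration only.

```python
# Propagation speeds in metres per second.
SPEED_OF_LIGHT = 299_792_458.0   # LiDAR / Radar (vacuum value)
SPEED_OF_SOUND_WATER = 1500.0    # Sonar; rough assumed value for sea water


def range_from_time_of_flight(delta_t, speed):
    """Return the one-way distance in metres for a round-trip time in seconds."""
    if delta_t < 0:
        raise ValueError("time of flight must be non-negative")
    # The signal covers the sensor-to-surface distance twice.
    return 0.5 * speed * delta_t


# A LiDAR echo after 100 ns and a sonar echo after 0.2 s (illustrative values).
lidar_range = range_from_time_of_flight(100e-9, SPEED_OF_LIGHT)
sonar_range = range_from_time_of_flight(0.2, SPEED_OF_SOUND_WATER)
```

With these inputs the LiDAR range comes out at roughly 15 m and the sonar range at 150 m, which illustrates why the two techniques need very different timing resolution.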

Depth-sensing is achieved by means of waves or rays that are sent out by a transmitter, reflected at surfaces and detected again by a receiver.

Artificial Intelligence (AI) approaches, often based on ML techniques, can then be used to understand the structure of the environment by providing a semantic segmentation of the 3D point cloud, i.e., the detection and classification of the various objects in the scene. To train such classifiers, however, large amounts of training data are required that provide labeled examples of correct classifications. In this paper, we propose an approach (accessed on 17 March 2021) where virtual worlds with virtual depth sensors are used to generate labeled point clouds (Figure 1c) based on 3D meshes (Figure 1a). In addition, we can automatically annotate rendered images for image classification tasks within this pipeline (see Section 4).

Figure 1. Main steps of synthetic point clouds. In (a) the mesh representation of a certain object (Suzanne) is shown. (b) comprises the virtually measured point cloud, while in (c) the point cloud is labeled for classification and segmentation tasks.

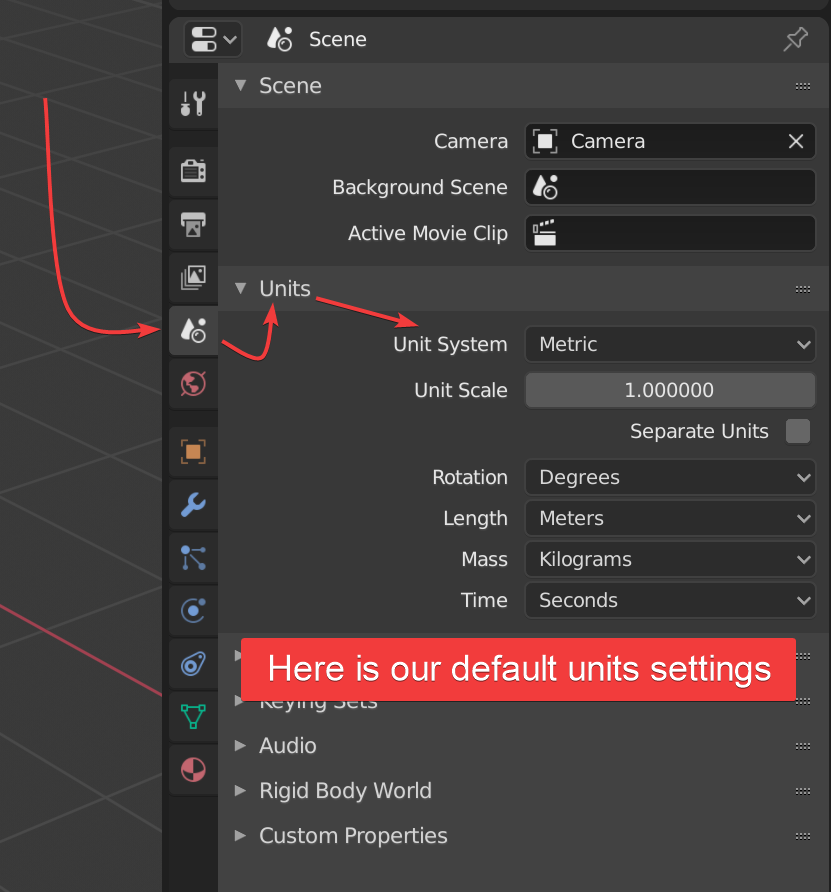
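The principle of a virtual depth sensor producing labeled points can be illustrated with a small ray-casting sketch. It is deliberately simplified and is not the add-on's implementation: the scene consists of two hypothetical labeled spheres instead of 3D meshes, the sensor sweeps a single horizontal scan line, and all names (`SCENE`, `ray_sphere_distance`, `scan`) are assumptions.

```python
import math

# Hypothetical scene: spheres stand in for labeled 3D meshes.
SCENE = [
    {"label": "ball", "center": (0.0, 0.0, 5.0), "radius": 1.0},
    {"label": "pillar", "center": (3.0, 0.0, 8.0), "radius": 1.0},
]


def ray_sphere_distance(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest sphere hit, or None.

    Assumes the ray origin lies outside the sphere.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere
    t = -b - math.sqrt(disc)
    return t if t > 0 else None


def scan(origin, azimuths_deg):
    """Cast one ray per azimuth in the x-z plane and return labeled hit points."""
    points = []
    for az in azimuths_deg:
        a = math.radians(az)
        direction = (math.sin(a), 0.0, math.cos(a))
        hits = []
        for obj in SCENE:
            t = ray_sphere_distance(origin, direction, obj["center"], obj["radius"])
            if t is not None:
                hits.append((t, obj["label"]))
        if hits:
            # The closest surface occludes everything behind it.
            t, label = min(hits)
            point = tuple(o + t * d for o, d in zip(origin, direction))
            points.append((point, label))
    return points


labeled_cloud = scan(origin=(0.0, 0.0, 0.0), azimuths_deg=range(-30, 31, 5))
```

Because every hit is traced back to the object that caused it, each point receives its semantic label at no extra cost, which is the key advantage of simulated over manually annotated measurements.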

Depth sensors have become ubiquitous in many application areas, e.g., robotics, driver assistance systems, geo modeling, and 3D scanning using smartphones. The output of such depth sensors is often used to build a 3D point-cloud representation of the environment.

Common Machine-Learning (ML) approaches for scene classification require a large amount of training data. However, for classification of depth sensor data, in contrast to image data, relatively few databases are publicly available and manual generation of semantically labeled 3D point clouds is an even more time-consuming task. To simplify the training data generation process for a wide range of domains, we have developed the BLAINDER add-on package for the open-source 3D modeling software Blender, which enables a largely automated generation of semantically annotated point-cloud data in virtual 3D environments. In this paper, we focus on the classical depth-sensing techniques Light Detection and Ranging (LiDAR) and Sound Navigation and Ranging (Sonar). Within the BLAINDER add-on, different depth sensors can be loaded from presets, customized sensors can be implemented and different environmental conditions (e.g., influence of rain, dust) can be simulated. The semantically labeled data can be exported to various 2D and 3D formats and are thus optimized for different ML applications and visualizations. In addition, semantically labeled images can be exported using the rendering functionalities of Blender.
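As a rough illustration of what an exported, semantically labeled point cloud might look like, the sketch below serializes points to CSV text. The column layout (`x, y, z, label`), the three-decimal formatting and the function name are assumptions for this example and do not describe the add-on's actual export formats.

```python
import csv
import io


def labeled_points_to_csv(points):
    """Serialize ((x, y, z), label) pairs to CSV text (hypothetical layout)."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["x", "y", "z", "label"])
    for (x, y, z), label in points:
        # Fixed precision keeps the file compact and diff-friendly.
        writer.writerow([f"{x:.3f}", f"{y:.3f}", f"{z:.3f}", label])
    return buffer.getvalue()


# Two illustrative labeled points.
csv_text = labeled_points_to_csv([
    ((0.0, 0.0, 4.0), "ball"),
    ((2.581, 0.0, 7.092), "pillar"),
])
```

A plain-text layout like this is easy to load into most ML tooling; binary or domain-specific formats are usually preferable for large clouds.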
